
This is also a crucial factor when dealing with new data sets where users might know the contents as it will be ideal for exploratory analysis with wide-ranging data. Thus, as a general rule, a broader range of data is extracted to ensure that all the required data is collected. It might not be possible to pinpoint exact subsets of data depending on the source in a typical extraction phase. Sources can consist of any type of structured or unstructured data such as: There, the locations from where the raw data is extracted are known as sources or source locations. The basic workflow of the extraction process is to copy or export raw data from different locations and store them in a staging location for further processing.

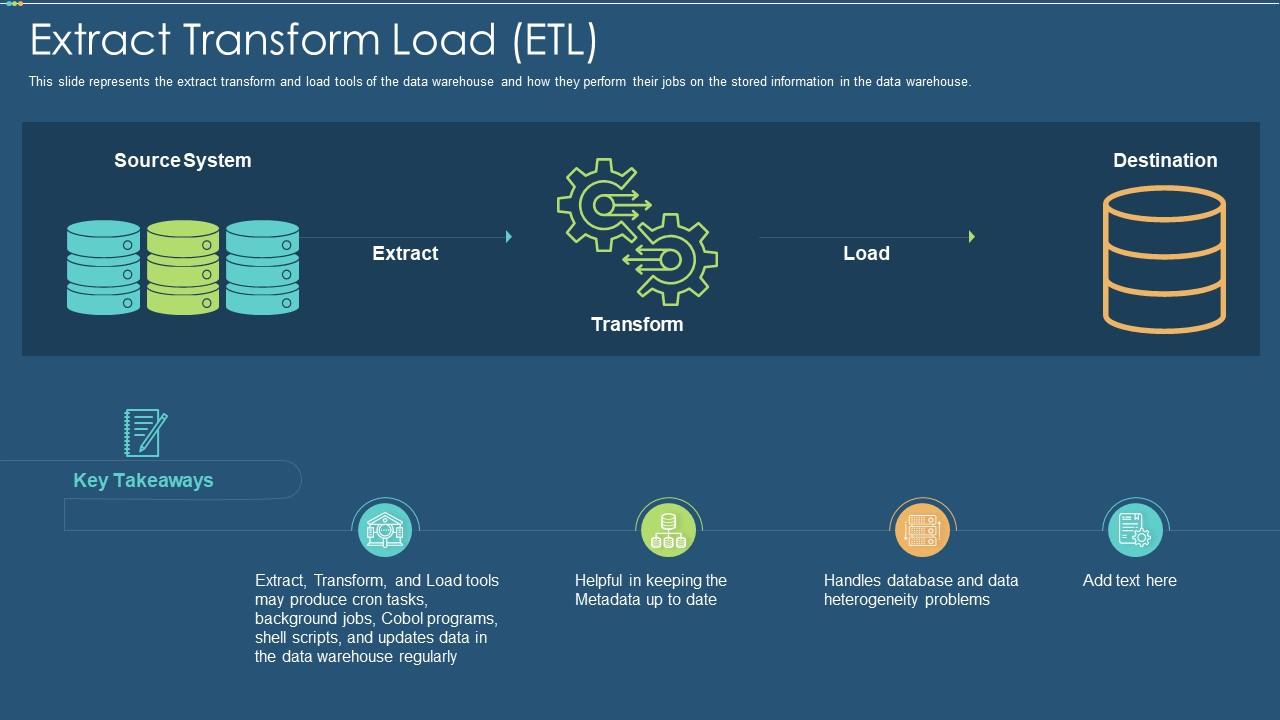

This is the first step of the ETL process. In this section, we will dive into the exact functionality of these components. Extract, transform & load basicsĮach of these components or tasks represents a separate function of an ETL pipeline. Let’s take a deeper look into ETL in this article. Streamline the reviewing process leading to better business decisions.Create a consolidated view of your data in various formats and multiple locations.The primary goals of adapting ETL in organizations are to: Thus, ELT has become an important factor in an organizational data strategy. However, ETL has now evolved to become the primary method for processing large amounts of data for data warehousing and data lake projects. With the growing popularity of databases in 1970, ETL was introduced as a process for loading data for computation and analysis. Short for extract, transform & load, ETL is the process of aggregating data from multiple different sources, transforming it to suit the business needs, and finally loading it to a specified destination (storage location). The importance of data has skyrocketed with the growing popularity and implementation of big data, analytics, and data sciences. All data-from simple application logs and system metrics to user data-are quantifiable data that can be used for data analytics. Automated Mainframe Intelligence (BMC AMI)ĭata is the key driving force in most applications today.Control-M Application Workflow Orchestration.Accelerate With a Self-Managing Mainframe.Apply Artificial Intelligence to IT (AIOps).Jiang.Ī link to the print version on the BeyeNetwork can be found here. They are also valid with regard to the so-called enterprise data integration in general”, concludes Dr.

“As a matter of fact, the validity of the discussion results obtained in this article is not limited only to data warehouses. In most cases, this placement decides the effectiveness of the resulting data warehouses.”īe sure to check out the summary by the good doctor of the four evolutional phases of data warehouse architecture discussed along with the five driving forces. In this article, he focus eson the placement and treatment evolution of three major functionalities within data warehouses, i.e., extracting data (E), transforming data (T) and loading data (L). Jiang’s most recent post is a great review of the “architectural evolution road that data warehouses generally have followed in the past and the possibilities leading to the future.

Bin Jiang a Distinguished Professor of a large university in China, and the author of the book Constructing Data Warehouses with Metadata-driven Generic Operators.ĭr. And one of their best blog experts is Dr. There are few better online sources than the BeyeNETWORK which focuses on business intelligence, performance management, data warehousing, data integration and data quality.
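To make the extraction step described in this post a little more concrete, here is a minimal Python sketch of a toy extract, transform & load pipeline. It is an illustration under stated assumptions, not BMC's or Dr. Jiang's method: it assumes local CSV files as the sources, a local directory as the staging location, and an in-memory SQLite database as the destination. The file names, the `sales` table, the `customer`/`amount` columns, and the helper functions are all hypothetical. Extraction copies the raw files unchanged into staging, transformation skips incomplete rows and normalizes names, and loading writes the result to the destination.

```python
from __future__ import annotations

import csv
import shutil
import sqlite3
import tempfile
from pathlib import Path


def extract(sources: list[Path], staging_dir: Path) -> list[Path]:
    """Copy raw source files, unchanged, into a staging location."""
    staging_dir.mkdir(parents=True, exist_ok=True)
    staged = []
    for src in sources:
        dest = staging_dir / src.name
        shutil.copy2(src, dest)  # raw copy: no filtering happens at this stage
        staged.append(dest)
    return staged


def transform(staged: list[Path]) -> list[tuple[str, float]]:
    """Parse staged CSVs, skip incomplete rows, and normalize customer names."""
    rows = []
    for path in staged:
        with path.open(newline="") as f:
            for rec in csv.DictReader(f):
                if not rec.get("customer") or not rec.get("amount"):
                    continue  # incomplete record: leave it out of the load
                rows.append((rec["customer"].strip().lower(), float(rec["amount"])))
    return rows


def load(rows: list[tuple[str, float]], conn: sqlite3.Connection) -> int:
    """Write transformed rows to the destination table (hypothetical schema)."""
    conn.execute("CREATE TABLE IF NOT EXISTS sales (customer TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    conn.commit()
    return len(rows)


def run_pipeline(sources: list[Path], staging_dir: Path, conn: sqlite3.Connection) -> int:
    """Run extract -> transform -> load and return the number of rows loaded."""
    return load(transform(extract(sources, staging_dir)), conn)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        # Two hypothetical source files; Bob's row is missing an amount.
        (root / "store_a.csv").write_text("customer,amount\nAlice,10.5\nBob,\n")
        (root / "store_b.csv").write_text("customer,amount\n ALICE ,4.5\nCarol,7\n")
        conn = sqlite3.connect(":memory:")
        sources = [root / "store_a.csv", root / "store_b.csv"]
        print(run_pipeline(sources, root / "staging", conn))  # 3
        query = "SELECT customer, SUM(amount) FROM sales GROUP BY customer ORDER BY customer"
        for row in conn.execute(query):
            print(row)  # ('alice', 15.0) then ('carol', 7.0)
        conn.close()
```

Keeping the extract step a plain copy mirrors the general rule of extracting a broader range of data: nothing is discarded until the transform step, so the staged files can be re-processed later if the transformation rules change.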
